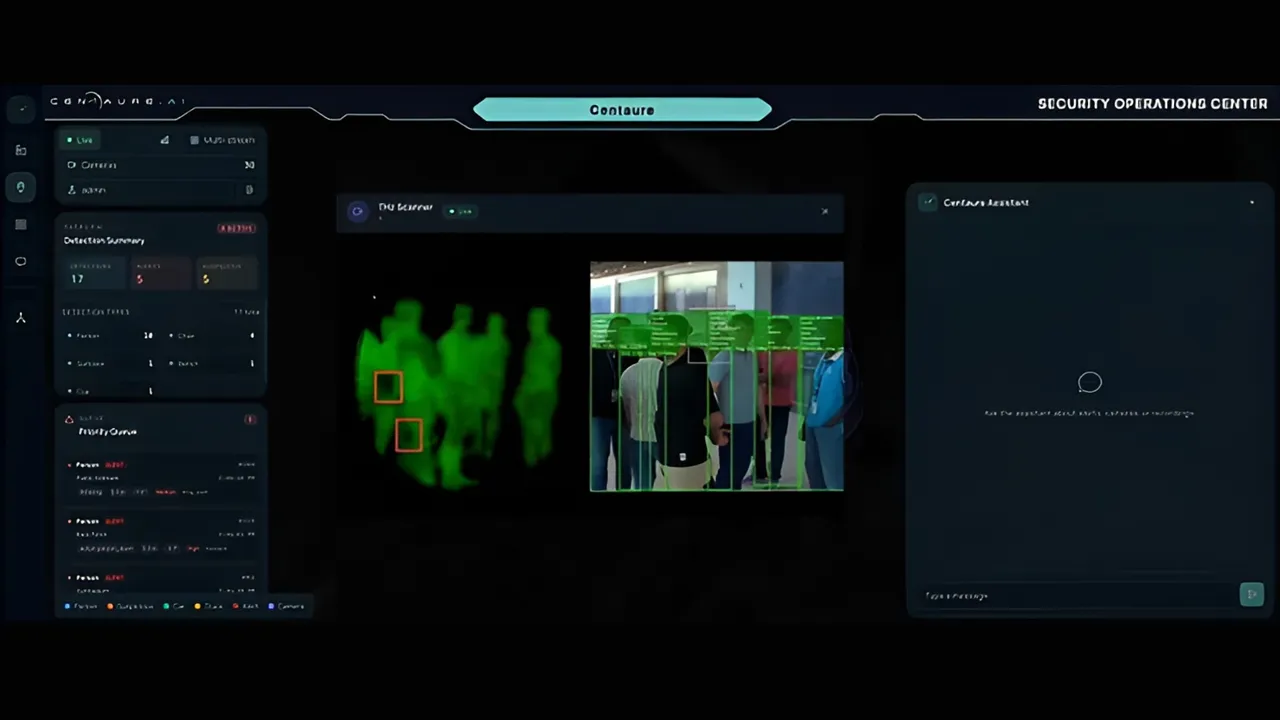

The Institute of Foundation Models at the Mohamed bin Zayed University of Artificial Intelligence (MBZUAI) in the UAE, together with UC San Diego, has introduced FastVideo, a real-time AI video generation system. It produces a five-second 1080p clip in under five seconds on a single GPU, slightly faster than real-time playback, and challenges established global players such as OpenAI in generative AI.

Quick Facts

- Generates a five-second 1080p clip in under five seconds.

- Operates 20 to 25 times faster than OpenAI’s Sora.

- Runs on a single GPU.

Overcoming Computational Limits in Video Diffusion

Current AI video generation leaders face steep computational costs. OpenAI’s Sora requires one to two minutes to render a five-second 1080p clip. In contrast, FastVideo delivers the same five-second output in just 4.55 seconds using a single GPU.
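The arithmetic behind that comparison can be checked with a short sketch. It uses only the figures quoted above; the function and constant names are illustrative and do not come from FastVideo.

```python
def speedup(baseline_seconds: float, fast_seconds: float) -> float:
    """Return how many times faster the second render time is than the first."""
    return baseline_seconds / fast_seconds


CLIP_SECONDS = 5.0            # clip length quoted in the article
FASTVIDEO_SECONDS = 4.55      # FastVideo render time quoted in the article
SORA_SECONDS = (60.0, 120.0)  # "one to two minutes" quoted for Sora

low = speedup(SORA_SECONDS[0], FASTVIDEO_SECONDS)
high = speedup(SORA_SECONDS[1], FASTVIDEO_SECONDS)

# A value above 1.0 means the clip renders faster than it plays back.
realtime_factor = CLIP_SECONDS / FASTVIDEO_SECONDS

print(f"Speedup over Sora: {low:.1f}x to {high:.1f}x")  # 13.2x to 26.4x
print(f"Real-time factor: {realtime_factor:.2f}x")      # 1.10x
```

On these numbers the speedup works out to roughly 13 to 26 times, a range that contains the quoted 20 to 25 times, and the clip renders about 10 percent faster than it plays back.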

This speedup comes from a trainable sparse attention mechanism built into FastVideo’s core. The architecture significantly lowers the compute requirements of video diffusion (the iterative denoising process these models use to produce frames), showing that high-resolution generative video does not have to be prohibitively expensive to run live.
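The article does not describe how FastVideo's sparse attention works internally, so the following is only a generic illustration of the idea, not the project's method. It shows top-k sparse attention in plain Python: each query keeps its k highest-scoring keys and ignores the rest. The function name and the toy inputs are assumptions made for this sketch.

```python
import math


def sparse_topk_attention(queries, keys, values, k):
    """Toy top-k sparse attention: each query attends to its k best keys only."""
    dim = len(keys[0])
    outputs = []
    for q in queries:
        # Scaled dot-product score of this query against every key.
        scores = [
            sum(a * b for a, b in zip(q, key)) / math.sqrt(dim) for key in keys
        ]
        # Keep the indices of the k highest-scoring keys; drop the rest.
        kept = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
        # Softmax over the kept scores only (shifted by the max for stability).
        peak = max(scores[i] for i in kept)
        weights = [math.exp(scores[i] - peak) for i in kept]
        total = sum(weights)
        # Weighted sum of the value vectors that belong to the kept keys.
        out = [0.0] * len(values[0])
        for w, i in zip(weights, kept):
            for d, v in enumerate(values[i]):
                out[d] += (w / total) * v
        outputs.append(out)
    return outputs


queries = [[1.0, 0.0]]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]

# With k=1 the query attends only to the first key, its best match.
print(sparse_topk_attention(queries, keys, values, k=1))  # [[1.0, 0.0]]
```

This toy version still scores every key before selecting, so it illustrates the selection step rather than the speed gain. Practical systems typically choose which regions to attend to with a cheaper coarse pass, so that the expensive computation touches only a fraction of the tokens.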

The system is designed as an open, modular framework. It supports sparse distillation, full fine-tuning, and scalable training across up to 64 GPUs with near-linear performance gains. Recognizing its utility, NVIDIA has already integrated FastVideo as a supported backend on its Dynamo inference platform.

K2 Think and the Shift to Vibe Directing

Speed is only one part of the equation. FastVideo operates in tandem with MBZUAI’s reasoning language model, K2 Think (K2-V2). Instead of blindly executing a static prompt, K2 Think acts as an intelligent director, providing live reasoning and control during the video generation process.

To package this capability for creators, the research team launched Dreamverse, a prototype interface built on top of the FastVideo framework. Dreamverse introduces a concept the developers call “vibe directing.”

Rather than crafting a single, exhaustive text prompt, users guide the output through rapid, iterative natural language instructions. The interface lets creators adjust camera angles, modify motion, swap backgrounds, and extend scenes across a chain of five-second clips, with each change applied almost instantly.

Implications for World Models and Simulation

Beyond creative workflows, real-time video generation unlocks critical progress in world model research. Most current AI models operate by predicting the next word or pixel. In contrast, frameworks like the PAN World Model aim to predict the next state of the physical world, integrating language, spatial data, video, and physical actions into a unified simulation.

Historically, the massive computational load of real-time generation has bottlenecked generalized world models. By enabling near-instant video rendering, FastVideo removes a major practical barrier, allowing AI systems to reason about cause and effect or test decisions in high-stakes simulations.

The technology also signals major shifts for commercial sectors, holding direct applications for video-on-demand, real-time media streaming, and dynamic video gaming environments where assets can be generated on the fly.

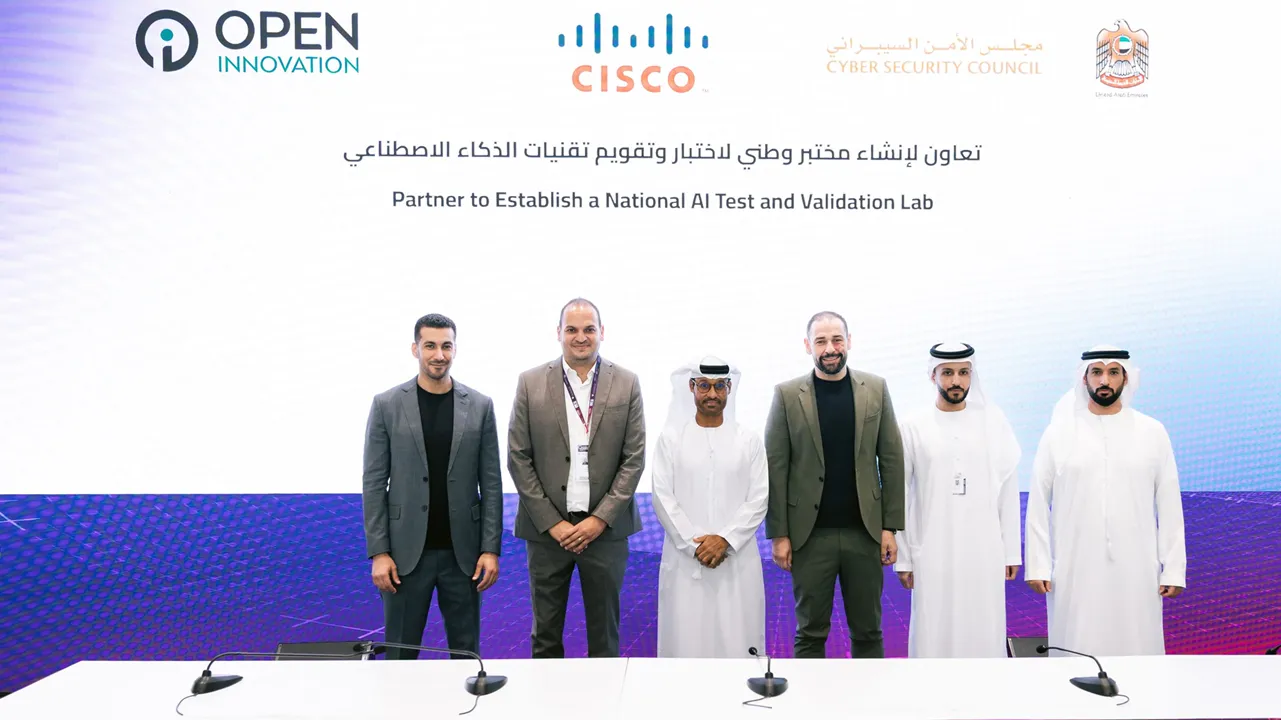

About MBZUAI Institute of Foundation Models

The Institute of Foundation Models (IFM) is a specialized research division within the Mohamed bin Zayed University of Artificial Intelligence (MBZUAI) in Abu Dhabi, UAE. The institute focuses on developing advanced, open-source AI models and frameworks, including the K2-V2 foundation model built with support from NVIDIA, to advance general-purpose language capabilities and multimodal AI research.

Source: Middle East AI News